From: https://towardsdatascience.com/cellular-automaton-and-deep-learning-2bf7c57139b3

Cellular Automatons (CA) are evolving grid-based computational systems which model complexity growth. In simpler words, cellular automatons are evolving patterns that are unidirectional and chaotic. The rules of evolution are very elementary and simple however, the evolution gives rise to highly complex structures and behaviour. CA have been proposed as models of how complexity grows in the physical chaotic system and how symmetry can emerge from simple laws. A parallel that I like to draw is to compare CA with Statistical Mechanics: The concept of temperature does not exist at a molecular/atomic level for gases. Temperature is not a property of a single molecule. However, when you put millions/billions of molecules in a box the dynamics and kinematics between the molecules give rise to Temperature. We call temperature an “emergent” property of the ensemble. CA behave in a similar way – they follow very simple local rules and a collection of CA gives rise to “emergent” symmetries and complex behaviour.

In this blog we will focus on the most famous example of a 2d CA known as “Game-of-Life” (GOL). This was discovered by the Conway in 1970s. Evolution of a cell depends on its neighbours. A cell has two states — dead (black) or alive (white). The automaton follows these simple rules,

- A cell with all dead neighbours dies because of loneliness.

- A cell with more than 3 live neighbours dies of starvation

- A dead cell with 3 or more live neighbours becomes alive because of reproduction

- A cell with 2 or 3 live neighbours remains in the same state — stasis.

We represent all these states in the following figure,

We let a 2d grid of CA evolve over time and obtain interesting structures. The initial starting point is typically random. Certain fixed starting point gives rise to specific structures. People have tried to classify the zoology of the structures that can appear. Let us see some examples of evolution with random initial states,

Sometimes (based on initial conditions) very interesting structures like the following emerge,

Wikipedia article on CA contains lots of examples of these complex structures. Notice that the above figure has a symmetry associated with it whereas the rules were local and had no symmetry. This is an example of “emergent” symmetry.

Enough talk. What has Deep Learning got to do with it?

Implementation

The fact that the rules are local (they only look at the neighbours) means that CA bears a great resemblance to the convolution operation. Motivated by this link proposed the following architecture as a CA model,

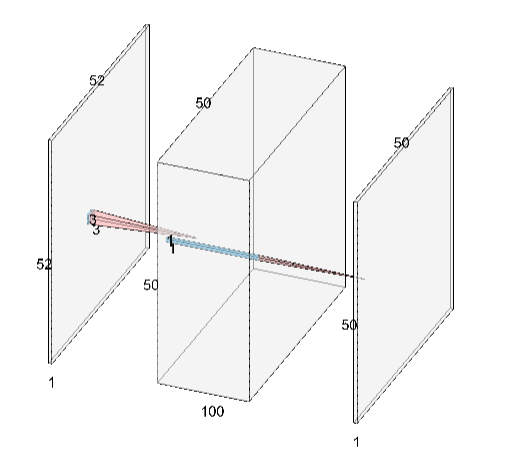

We start with a 50×50 image (this is our world grid) and perform a roll-over padding to make it 52×52. Roll-over padding means that we identify left-right and top-bottom edges, to make the whole grid continuous. The 52×52 image is fed into 100 3×3 filters. This number of the filter varies with the kind of CA you want to model. GOL can be simulated with at least 5 filters. Finally, we implement a 1×1 convolution to get a 50×50 image for the next time-step. We would like to emphasise that this network resembles a one-single time step evolution of CA. With just one layer of convolution, it is impossible to model arbitrary long time-step evolutions. To model, a CA one needs to keep reusing the network to generate n-step evolutions. Now, let us look at the implementation in TensorFlow,

We train the CA on one-step evolution on randomly generated initial states. The full- code for training and evaluating the model is given here.

Now let us look at various applications of CA in real-world situations.

Applications

- Error Correction.

CA can quickly learn from very few examples. A CA can be trained on images to remove impurities as a self-correcting grid. Some examples of image error corrections are,

2. One Way function:

The propagation of CA is not a bijective function hence given a state at a time step it is virtually impossible to find the initial configuration. The number of possible previous states grow exponentially. I experimented with both deep and wide conv-nets to map a backward function and could not find initial configurations. This suggests finding the inverse is a non-trivial task and maybe not possible at all.

3. ARC (Abstract Reasoning Challenge)

François Chollet, the creator of Keras recently put out a paper, on how to measure intelligence. He suggested plenty of tasks, involving different kinds of abstract solving techniques. Each task has very few (typically 2–3) training samples and a test sample. The goal is to actually learn the logic needed to solve the tasks instead of learning some kind of transformation. Neural Network-based methods are prone to overfitting due to very few train examples being available. The SOTA methods use Decision trees and have success in about 50/400 (so pretty abysmal). Let us see some examples of tasks,

As you can see the tasks are very different and require global physics, topology etc knowledge. Designing a single neural network that can generalize to all possible situation is almost an impossible task. CA-based methods are a proposed solution to the following tasks. Some of my rudimentary experiments give 20/400 success rate. Let me know in the comments below if you want me to use CA to solve ARC tasks.

References

- Code: Github

- Game of Life: https://en.wikipedia.org/wiki/Conway%27s_Game_of_Life

- CA as Conv-Net : https://arxiv.org/abs/1809.02942

- Measure of Intelligence: https://arxiv.org/abs/1911.01547